Alibaba Cloud Model Studio node added

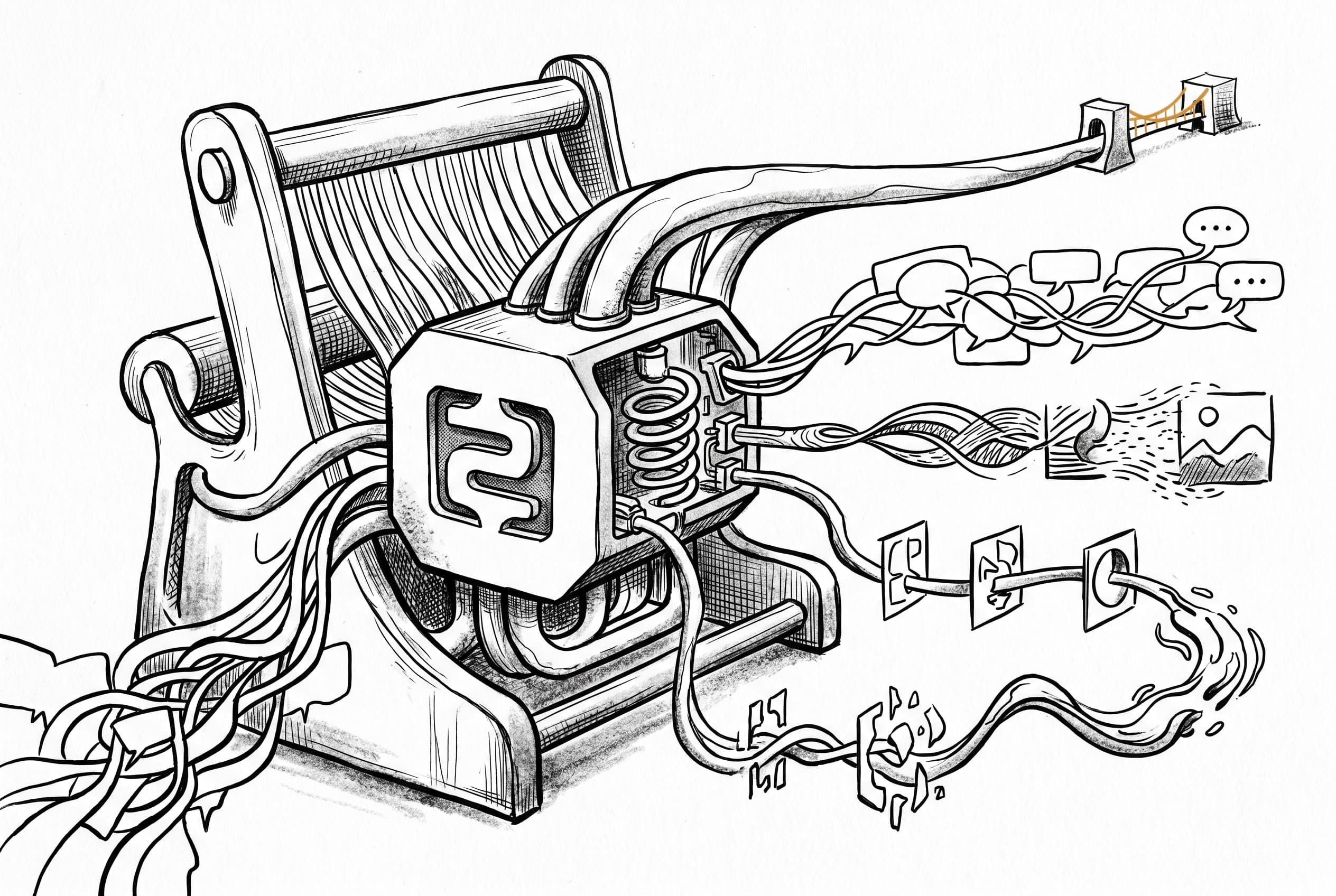

n8n now includes native support for Alibaba Cloud's Model Studio, letting workflows chat with Qwen models, generate images, and create videos.

n8n workflows can now interact directly with Alibaba Cloud's Model Studio API. A new vendor node brings three operation types: text chat completions with Qwen models, image analysis and generation, and video creation from text or existing images. The text operation supports tool and function calling, allowing Qwen models to integrate with n8n's AI Agent system. Generated images download automatically as binary data, while video generation uses asynchronous polling with automatic retry logic. The node follows the established vendor pattern in the langchain package, using the shared AlibabaCloudApi credential with regional endpoints including Frankfurt workspace support. Model lists are currently static, though the architecture supports dynamic loading via the OpenAI-compatible models endpoint in a future update.


Summary

Add the Alibaba Cloud Model Studio standalone node, based on community PR #27814 by @dmolenaars, integrated into core with review feedback applied.

Features

- Text: Chat completions with Qwen models (Qwen3 Max, Qwen3.5 Plus/Flash, MoE variants). Supports multi-turn conversations, web search, and configurable parameters. Includes tool connector for AI Agent integration.

- Image: Vision-language analysis (Qwen-VL), text-to-image generation with auto-download (Z-Image Turbo, Wan 2.6 T2I, Qwen Image).

- Video: Text-to-video and image-to-video generation (Wan 2.6 T2V/I2V) with async polling, plus video download.
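The async polling mentioned for the Video operation can be illustrated with a small loop. This is a minimal sketch, not the node's actual implementation: the status strings, the `TaskState` shape, the `pollTask` helper, and its options are all assumptions made for illustration. The status lookup is passed in as a callback so the loop runs without a network call.

```typescript
// Hypothetical task states; the real API's status strings may differ.
type TaskStatus = 'PENDING' | 'RUNNING' | 'SUCCEEDED' | 'FAILED';

interface TaskState {
  status: TaskStatus;
  videoUrl?: string; // assumed to be set once the task has succeeded
}

interface PollOptions {
  intervalMs: number; // delay between status checks
  maxAttempts: number; // give up after this many checks
}

const sleep = (ms: number): Promise<void> =>
  new Promise((resolve) => setTimeout(resolve, ms));

// Calls fetchStatus until the task succeeds, fails, or the attempt budget runs out.
// An error thrown by fetchStatus uses up one attempt and is retried.
async function pollTask(
  fetchStatus: () => Promise<TaskState>,
  { intervalMs, maxAttempts }: PollOptions,
): Promise<TaskState> {
  let lastError: unknown;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    let state: TaskState | undefined;
    try {
      state = await fetchStatus();
    } catch (error) {
      lastError = error; // treated as transient: try again on the next attempt
    }
    if (state?.status === 'SUCCEEDED') return state;
    if (state?.status === 'FAILED') throw new Error('Video generation task failed');
    if (attempt < maxAttempts) await sleep(intervalMs);
  }
  const detail = lastError instanceof Error ? ` (last error: ${lastError.message})` : '';
  throw new Error(`Task did not finish after ${maxAttempts} attempts${detail}`);
}
```

Keeping a terminal `FAILED` status separate from a thrown request error means a failed task stops the loop immediately, while a flaky status request only costs one attempt.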

Architecture notes

- Model lists are currently static, matching the original community PR. The DashScope native API does not expose a unified `/models` endpoint filtered by capability. However, the OpenAI-compatible API (`/compatible-mode/v1/models`) is available and can be used in a follow-up to switch to dynamic model loading. This is fully backward compatible since the parameter name (`modelId`) and type (`options`) won't change.

- Telemetry: The text model parameter uses `modelId` (matching OpenAI/Gemini/Anthropic convention) and the node is registered in `AI_VENDOR_NODE_TYPES` for analytics tracking.
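To make the dynamic-loading follow-up concrete, here is a rough sketch of how a model list response could be turned into dropdown options. It assumes the compatible-mode endpoint returns the usual OpenAI-style `{ data: [{ id }] }` list. The `toModelOptions` and `isTextModel` names, the prefix-based filter, and the sample ids are hypothetical and not taken from the PR.

```typescript
// Assumed response shape, modelled on the OpenAI-style model list.
interface ModelListResponse {
  data: Array<{ id: string }>;
}

// Local stand-in for the name/value pairs an options parameter displays.
interface ModelOption {
  name: string;
  value: string;
}

// Hypothetical capability filter: the list is not grouped by capability,
// so this sketch guesses from the id (keep Qwen ids, drop vision-language ones).
const isTextModel = (id: string): boolean =>
  id.startsWith('qwen') && id.indexOf('-vl') === -1;

function toModelOptions(response: ModelListResponse): ModelOption[] {
  return response.data
    .map((model) => model.id)
    .filter(isTextModel)
    .sort() // default string ordering, enough for a stable dropdown
    .map((id) => ({ name: id, value: id }));
}

// Sample input with illustrative ids only.
const sampleOptions = toModelOptions({
  data: [{ id: 'wan2.6-t2v' }, { id: 'qwen3-max' }, { id: 'qwen-vl-max' }, { id: 'qwen-plus' }],
});
// sampleOptions holds two entries: 'qwen-plus' and 'qwen3-max'
```

Because each option's `value` stays a plain model id string, workflows saved against the static list would keep working, which is the backward-compatibility point made above.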

Changes from community PR

- Relocated node from `nodes-base` to `nodes-langchain/nodes/vendors/` (matching OpenAI, Gemini, Anthropic pattern)

- Unified credential to shared `AlibabaCloudApi` in `nodes-langchain/credentials/` (matching PR #27882's pattern) with region selector and workspace ID for Frankfurt

- Fixed codex identifier, removed unused `requestDefaults` and `routing` blocks

- Renamed snake_case parameter names to camelCase per n8n conventions

- Replaced `throw new Error()` with `NodeOperationError`

- Removed `extends IDataObject` from request body interface

- Set `usableAsTool: true`, human-readable model display names

- Extracted `versionDescription.ts` following vendor node pattern

- Added dynamic `inputs` for Tools connector on text/message operation

- Auto-download generated images as binary data (matching Gemini behavior)

- Removed redundant Download Image operation (generate now handles it)

- Renamed text model parameter to `modelId` for telemetry compatibility

- Added Frankfurt workspace ID validation with `UserError`

- Unified `simplifyOutput` and `pairedItem` formats across all operations

- Added node type constant to `AI_VENDOR_NODE_TYPES` for telemetry
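The Frankfurt workspace ID validation could look roughly like the sketch below. Everything here is an assumption for illustration: the credential field names, the `'frankfurt'` region key, the `assertWorkspaceId` helper, and the error message are hypothetical. `UserError` is declared locally as a stand-in so the snippet runs on its own.

```typescript
// Local stand-in for the UserError class named in the list above.
class UserError extends Error {}

// Hypothetical credential shape; the real field and region names may differ.
interface AlibabaCloudCredentials {
  region: string;
  workspaceId?: string;
}

// Regions assumed to need a workspace ID before any request is sent.
const REGIONS_REQUIRING_WORKSPACE = new Set<string>(['frankfurt']);

// Throws early with a user-facing message instead of letting the API call fail later.
function assertWorkspaceId(credentials: AlibabaCloudCredentials): void {
  const workspaceId = (credentials.workspaceId ?? '').trim();
  if (REGIONS_REQUIRING_WORKSPACE.has(credentials.region) && workspaceId === '') {
    throw new UserError('A workspace ID is required when the Frankfurt region is selected');
  }
}
```

Checking the credential before building the request gives the user a clear configuration error rather than an opaque HTTP failure from the regional endpoint.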

Related Linear tickets, Github issues, and Community forum posts

https://linear.app/n8n/issue/NODE-4759

Based on community contribution: https://github.com/n8n-io/n8n/pull/27814

Review / Merge checklist

- PR title and summary are descriptive. (conventions)

- Docs updated or follow-up ticket created.

- Tests included.

- PR Labeled with `release/backport` (if the PR is an urgent fix that needs to be backported)