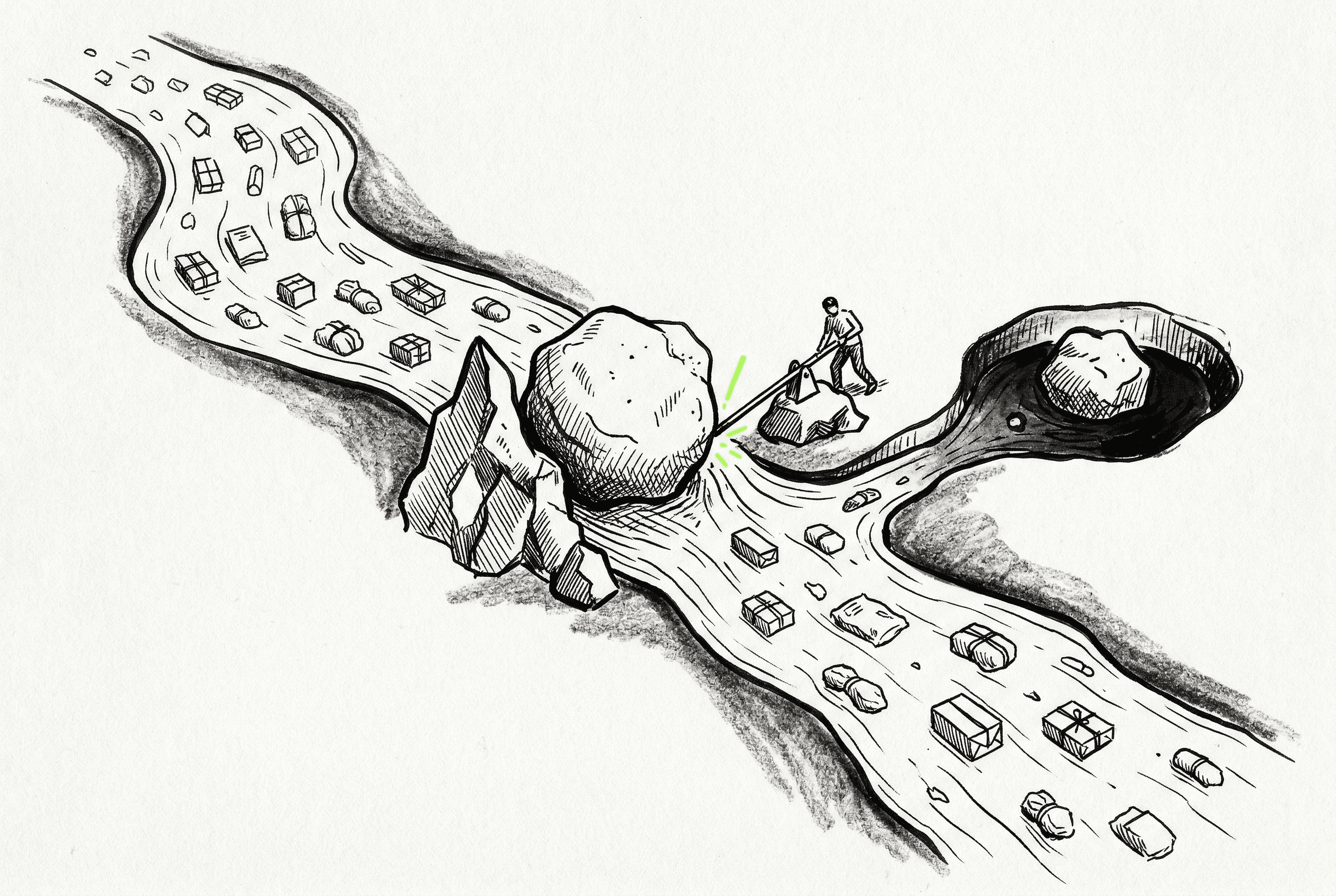

Large batches that contain oversized payloads are now processed without aborting the entire operation. Previously, a single oversized item caused a stream parsing error that halted the whole batch and triggered retries with exponential backoff.

Each item that exceeds the maximum size limit is now identified and recorded as an individually failed run. The remnants of an oversized line that was split across chunks are discarded, so they no longer cause parsing errors on subsequent items in the stream.
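The discard behaviour described above can be illustrated with a minimal sketch. It assumes a newline-delimited stream that arrives in arbitrary byte chunks; the function name `split_lines`, the `max_line_bytes` parameter, and the `"ok"`/`"oversized"` labels are hypothetical and are not taken from the actual implementation.

```python
from typing import Iterable, Iterator, Tuple


def split_lines(
    chunks: Iterable[bytes], max_line_bytes: int
) -> Iterator[Tuple[str, bytes]]:
    """Yield ("ok", line) or ("oversized", prefix) for each line in the stream.

    Hypothetical sketch: once a line exceeds max_line_bytes, only its first
    max_line_bytes bytes are reported and the rest is dropped up to the next
    newline, so the following line starts from a clean buffer.
    """
    buf = bytearray()
    discarding = False  # True while skipping the remnants of an oversized line
    for chunk in chunks:
        start = 0
        while start < len(chunk):
            nl = chunk.find(b"\n", start)
            end = len(chunk) if nl == -1 else nl
            if not discarding:
                buf += chunk[start:end]
                if len(buf) > max_line_bytes:
                    # Report the item once, keeping only a bounded prefix.
                    yield ("oversized", bytes(buf[:max_line_bytes]))
                    buf.clear()
                    discarding = True
            if nl == -1:
                break  # line continues in the next chunk
            if discarding:
                discarding = False  # newline ends the oversized line
            else:
                yield ("ok", bytes(buf))
                buf.clear()
            start = nl + 1
    if buf and not discarding:
        yield ("ok", bytes(buf))  # final line without a trailing newline


chunks = [
    b'{"id":1}\n{"id":2,"pa',
    b'yload":"xxxxxxxxxxxxxxxxxxxxxxxx',
    b'xxxx"}\n{"id":3}\n',
]
results = list(split_lines(chunks, max_line_bytes=16))
```

In this sketch the second item is reported once as oversized, and the item after it still parses as a normal line because the leftover bytes of the oversized line never reach the buffer.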

The raw byte stream is scanned to extract the routing metadata needed for each oversized item without loading the item fully into memory. Oversized items are then routed to a pre-failed state, and the details page now displays the status and ID of these runs.
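As a rough illustration of scanning raw bytes for routing metadata, the sketch below searches only a bounded prefix of an oversized item for an ID and builds a failed-run record from it. The field name `"id"`, the ID character set, the `scan_limit` default, and the record shape are all assumptions made for this example, not details of the actual implementation.

```python
import re
from typing import Optional

# Assumption: the run ID appears as a quoted hex/dash string under an "id" key.
_ID_RE = re.compile(rb'"id"\s*:\s*"([0-9a-fA-F-]{1,64})"')


def extract_run_id(prefix: bytes, scan_limit: int = 4096) -> Optional[str]:
    """Search at most scan_limit bytes of the raw item for a run ID.

    The payload is never JSON-decoded. Limitation of this sketch: the first
    "id" key found wins, even if it belongs to a nested object.
    """
    match = _ID_RE.search(prefix, 0, scan_limit)
    return match.group(1).decode("ascii") if match else None


def to_failed_run(prefix: bytes, size_bytes: int, max_bytes: int) -> dict:
    """Build a hypothetical pre-failed run record for an oversized item."""
    return {
        "id": extract_run_id(prefix),
        "status": "failed",
        "error": f"payload of {size_bytes} bytes exceeds limit of {max_bytes} bytes",
    }


record = to_failed_run(
    b'{"id": "3f2a-01", "inputs": {"blob": "AAAA',
    size_bytes=50_000_000,
    max_bytes=20_000_000,
)
```

Scanning a fixed-size prefix keeps memory use bounded regardless of payload size, at the cost of missing an ID that appears later in the item.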