Developers can now filter errors by version, snooze them with custom thresholds, and configure smart alerts via Slack, email, or webhook to notify them only when new issues emerge or old ones regress.

“We added time-to-live limits so your overloaded queues can quietly abandon tasks instead of executing them.”

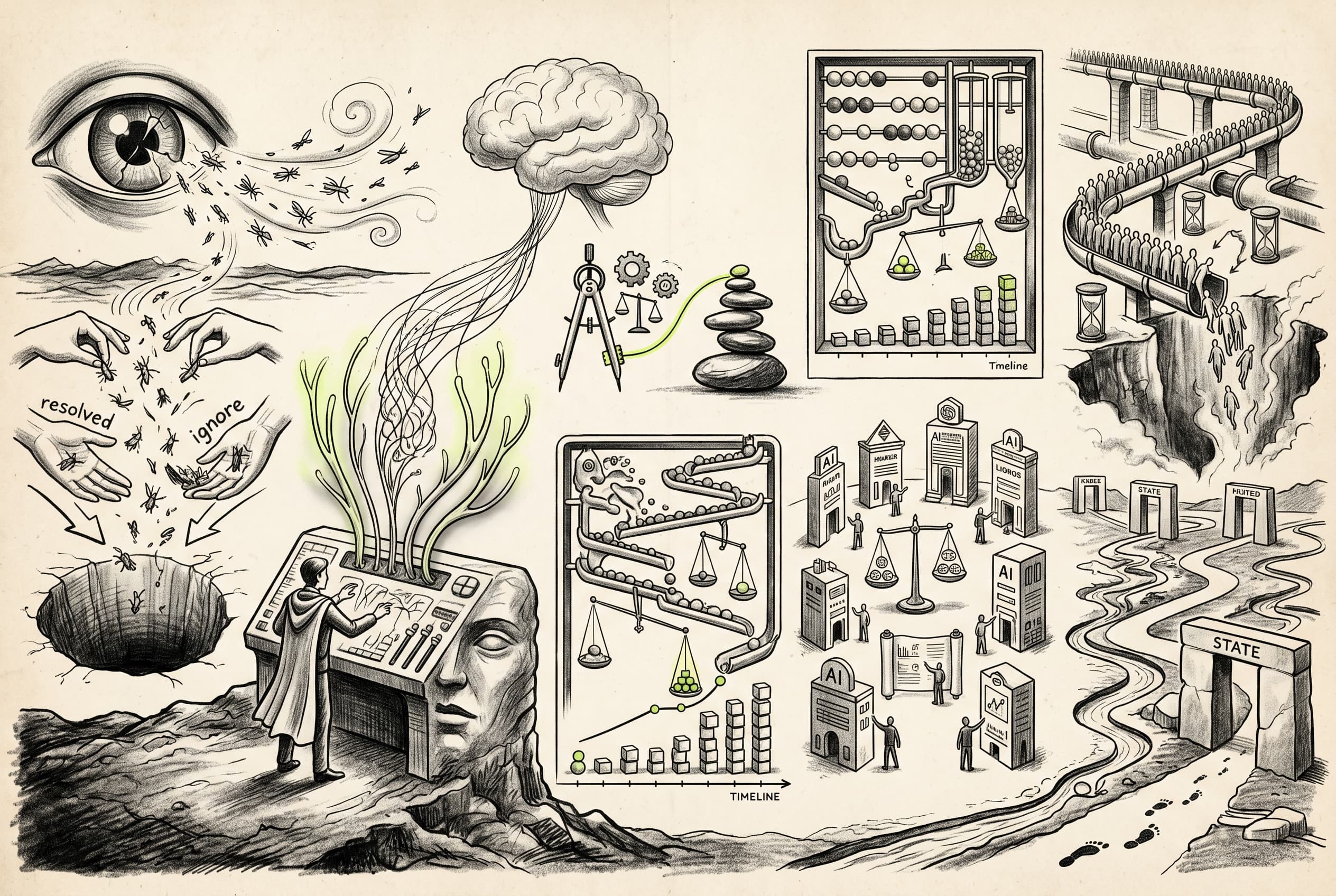

Managing artificial intelligence behavior no longer requires deploying code. Developers can now edit, version, and override AI prompts entirely from the web dashboard. A new inspector pairs these calls with automated cost tracking across more than 145 models, so teams can see at a glance what each run's text generation costs.

Background task queues have been updated to handle congestion with more grace. Administrators can configure time-to-live expiration rules so stale jobs simply vanish from backed-up queues instead of firing late. For enterprise deployments, task traffic can now be routed directly to private AWS networks, keeping sensitive databases off the public internet.
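The expiration rule described above can be pictured with a small sketch. This is not the product's API: the `QueuedJob` shape, the `ttlMs` field, and both function names are hypothetical, chosen only to illustrate how a time-to-live check might drop stale jobs from a backlog instead of running them late.

```typescript
// Hypothetical job shape; field names are illustrative, not a real SDK type.
interface QueuedJob {
  id: string;
  enqueuedAt: number; // epoch milliseconds when the job entered the queue
  ttlMs?: number;     // optional time-to-live; undefined means the job never expires
}

// A job counts as expired once it has waited longer than its TTL without starting.
function isExpired(job: QueuedJob, now: number): boolean {
  return job.ttlMs !== undefined && now - job.enqueuedAt > job.ttlMs;
}

// Split a backlog into jobs that should still run and jobs that should be dropped.
function partitionBacklog(
  jobs: QueuedJob[],
  now: number
): { runnable: QueuedJob[]; expired: QueuedJob[] } {
  const runnable: QueuedJob[] = [];
  const expired: QueuedJob[] = [];
  for (const job of jobs) {
    (isExpired(job, now) ? expired : runnable).push(job);
  }
  return { runnable, expired };
}
```

Under these assumptions, a worker would call `partitionBacklog(queue, Date.now())` before dequeuing and skip anything in `expired`. A job without a `ttlMs` is always treated as runnable.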

Developers can now define AI prompts in code with `prompts.define()`, then manage versions and overrides from the dashboard without redeploying. Rich AI span inspectors show prompt context and metrics in run traces.

Developers can now automatically track LLM costs across 145+ models directly in their traces, with a new inspector showing tokens, pricing, messages, and tool calls right in the span details.
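As a rough illustration of what per-span cost tracking involves, the sketch below multiplies token counts by per-model prices and sums the result across a run. The types, function names, and the per-million-token pricing convention are assumptions made for this example; they are not taken from the product, and any prices passed in are placeholders.

```typescript
// Hypothetical pricing entry, expressed in USD per one million tokens.
interface ModelPricing {
  inputPerMillion: number;
  outputPerMillion: number;
}

// Hypothetical token usage recorded on a single LLM span.
interface TokenUsage {
  inputTokens: number;
  outputTokens: number;
}

// Estimate the cost of one span from its token counts and the model's pricing.
function estimateSpanCostUsd(usage: TokenUsage, pricing: ModelPricing): number {
  const inputCost = usage.inputTokens * pricing.inputPerMillion;
  const outputCost = usage.outputTokens * pricing.outputPerMillion;
  return (inputCost + outputCost) / 1e6;
}

// Sum the estimated cost of every span in a run.
function estimateRunCostUsd(
  spans: { usage: TokenUsage; pricing: ModelPricing }[]
): number {
  return spans.reduce(
    (total, span) => total + estimateSpanCostUsd(span.usage, span.pricing),
    0
  );
}
```

Keeping pricing as data separate from usage means one lookup table can cover many models, which is presumably how a catalog of 145+ models would be handled, though the source does not say so.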

- Users can now browse AI models in a catalog, view detailed metrics per model, and compare performance across multiple models side by side. The feature includes a new sidebar section under AI with feature flag controls. (feature)

- The RunEngine documentation has been refreshed with a massive ASCII state diagram, updated terminology for regions, and the new token-based wait API. (docs)