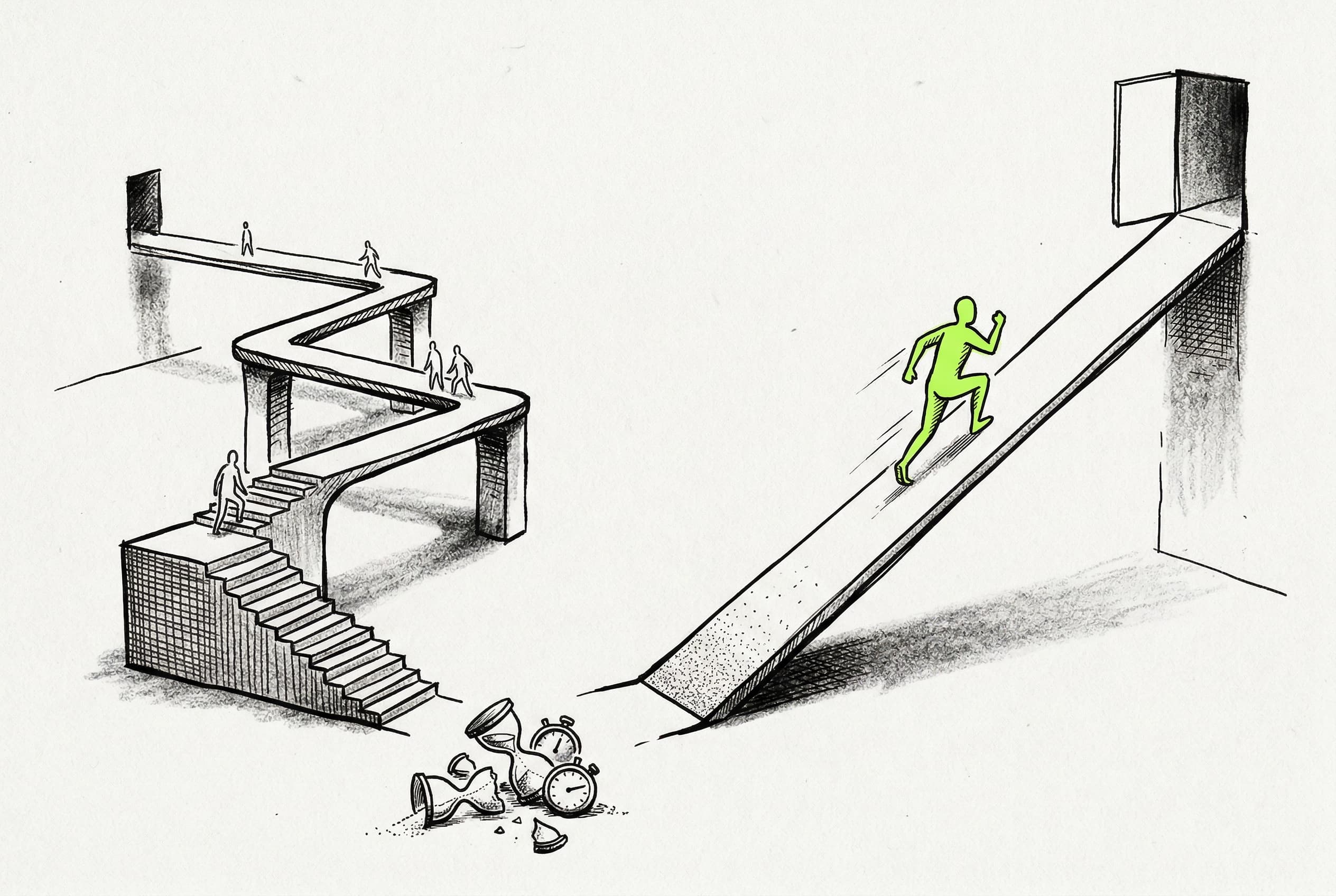

Background jobs can now execute instantly without queue latency

Triggered runs now skip the ~500ms debounce delay and go straight to a worker when nothing is already waiting in the queue and concurrency is available, removing that delay from their start latency.

Triggered runs previously sat in a queue for at least 500ms before a worker could pick them up, even when the system was completely idle.

The engine now includes a fast path that skips the intermediate queue entirely. When a new run is triggered, the system atomically checks that no other runs are already waiting to be dequeued and that environment and queue concurrency limits have room. If the coast is clear, the run goes straight to the worker queue.

For qualifying runs, this eliminates the artificial debounce latency, so they can start executing almost as soon as they are triggered. The capability is rolling out in the run engine: it is gated per region in production and always enabled in local development.

Original GitHub description

Summary

Currently, every triggered run follows a two-step path through Redis:

- Enqueue: A Lua script atomically adds the message to a queue sorted set (ordered by priority-adjusted timestamp)

- Dequeue: A debounced `processQueueForWorkerQueue` job fires ~500ms later, checks concurrency limits, removes the message from the sorted set, and pushes it to a worker queue (Redis list) where workers pick it up via `BLPOP`

This means every run pays at least ~500ms of latency between being triggered and being available for a worker to execute, even when the queue is empty and concurrency is wide open.
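To see where that latency comes from, the two-step path can be modeled in memory. This is a toy sketch, not the run engine's code: a sorted array stands in for the queue sorted set and a plain array for the worker queue list, and `SlowPathModel`, `DEBOUNCE_MS`, and `tick` are hypothetical names. It assumes the debounced job is scheduled once and not pushed back by later enqueues, which may differ from the real debounce behavior. Even with an empty queue and spare concurrency, the run only reaches the worker queue once the simulated clock passes the debounce delay.

```typescript
// Hypothetical debounce delay, matching the ~500ms described above.
const DEBOUNCE_MS = 500;

interface QueuedMessage {
  runId: string;
  score: number; // priority-adjusted timestamp; lower scores are dequeued first
}

class SlowPathModel {
  private queue: QueuedMessage[] = []; // stands in for the queue sorted set
  readonly workerQueue: string[] = []; // stands in for the worker queue Redis list
  private running = 0; // claimed concurrency slots (no ack in this model)
  private processAt: number | null = null; // when the debounced job will fire
  private readonly concurrencyLimit: number;

  constructor(concurrencyLimit: number) {
    this.concurrencyLimit = concurrencyLimit;
  }

  // Step 1: add to the sorted set and schedule the processing job if none is pending.
  enqueue(msg: QueuedMessage, now: number): void {
    this.queue.push(msg);
    this.queue.sort((a, b) => a.score - b.score);
    if (this.processAt === null) this.processAt = now + DEBOUNCE_MS;
  }

  // Step 2: advance the clock; once the job fires, move due messages
  // (score <= now) to the worker queue while concurrency allows.
  tick(now: number): void {
    if (this.processAt === null || now < this.processAt) return;
    this.processAt = null;
    while (
      this.queue.length > 0 &&
      this.queue[0].score <= now &&
      this.running < this.concurrencyLimit
    ) {
      const next = this.queue.shift()!;
      this.running += 1;
      this.workerQueue.push(next.runId);
    }
  }
}

const model = new SlowPathModel(10);
model.enqueue({ runId: "run_1", score: 0 }, 0); // idle queue, spare concurrency
model.tick(499);
console.log(model.workerQueue.length); // 0: the debounced job has not fired yet
model.tick(500);
console.log(model.workerQueue); // now contains run_1
```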

What changed

The enqueue Lua scripts now atomically decide whether to skip the queue sorted set entirely and push directly to the worker queue. This happens inside the same Lua script that handles normal enqueue, so the decision is atomic with respect to concurrency bookkeeping.
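Doing the check and the claim in one script matters because of the check-then-act race. The toy TypeScript model below uses hypothetical names, with a `Set` standing in for a Redis concurrency set. The non-atomic version reads capacity, awaits a simulated round trip, and only then claims a slot; the atomic version checks and claims in one synchronous step, which is the property a single Lua script gets from Redis running it without interleaving other commands. With a limit of 1, both non-atomic claims succeed.

```typescript
// Toy concurrency state for one queue; a Set stands in for a Redis concurrency set.
class ConcurrencyState {
  readonly running = new Set<string>();
  readonly limit: number;

  constructor(limit: number) {
    this.limit = limit;
  }
}

// Non-atomic: read capacity, yield (like a network round trip), then claim.
// Another caller can run between the check and the claim.
async function claimNonAtomic(state: ConcurrencyState, runId: string): Promise<boolean> {
  const hasCapacity = state.running.size < state.limit;
  await new Promise<void>((resolve) => setTimeout(resolve, 0));
  if (!hasCapacity) return false;
  state.running.add(runId);
  return true;
}

// Atomic: check and claim in one synchronous step with nothing interleaved,
// analogous to doing both inside one Lua script.
function claimAtomic(state: ConcurrencyState, runId: string): boolean {
  if (state.running.size >= state.limit) return false;
  state.running.add(runId);
  return true;
}

const racy = new ConcurrencyState(1);
Promise.all([claimNonAtomic(racy, "run_1"), claimNonAtomic(racy, "run_2")]).then(() => {
  console.log("non-atomic running:", racy.running.size); // 2, over the limit of 1
});

const safe = new ConcurrencyState(1);
claimAtomic(safe, "run_1");
claimAtomic(safe, "run_2");
console.log("atomic running:", safe.running.size); // 1
```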

A run takes the fast path when all of these are true:

- Fast path is enabled for this worker queue (gated per `WorkerInstanceGroup`)

- No available messages in the queue (`ZRANGEBYSCORE` finds nothing with score ≤ now). This respects priority ordering and allows the fast path even when the queue has future-scored messages (e.g. nacked retries with a delay)

- Environment concurrency has capacity

- Queue concurrency has capacity (including per-concurrency-key limits for CK queues)
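Read together, these conditions form a single predicate. The sketch below expresses it in TypeScript, assuming the relevant counters and scores have already been read; in the actual change the checks run in Lua inside Redis. `FastPathInputs`, its fields, and `canTakeFastPath` are hypothetical, and per-concurrency-key limits are simplified to one optional counter.

```typescript
// Hypothetical snapshot of the state the enqueue script reads.
interface FastPathInputs {
  fastPathEnabled: boolean; // per-WorkerInstanceGroup gate
  queuedScores: number[]; // scores currently in the queue sorted set
  envRunning: number;
  envLimit: number;
  queueRunning: number;
  queueLimit: number;
  // Only set for concurrency-key (CK) queues.
  concurrencyKeyRunning?: number;
  concurrencyKeyLimit?: number;
}

// Mirrors ZRANGEBYSCORE <queue> -inf <now> LIMIT 0 1 returning at least one member.
function hasAvailableMessage(scores: number[], now: number): boolean {
  return scores.some((score) => score <= now);
}

function canTakeFastPath(input: FastPathInputs, now: number): boolean {
  if (!input.fastPathEnabled) return false;
  // Anything already due must be dequeued first, so priority ordering is preserved.
  if (hasAvailableMessage(input.queuedScores, now)) return false;
  if (input.envRunning >= input.envLimit) return false;
  if (input.queueRunning >= input.queueLimit) return false;
  if (
    input.concurrencyKeyLimit !== undefined &&
    (input.concurrencyKeyRunning ?? 0) >= input.concurrencyKeyLimit
  ) {
    return false;
  }
  return true;
}

const now = 1000;
const idle: FastPathInputs = {
  fastPathEnabled: true,
  queuedScores: [],
  envRunning: 0,
  envLimit: 10,
  queueRunning: 0,
  queueLimit: 5,
};
console.log(canTakeFastPath(idle, now)); // true
console.log(canTakeFastPath({ ...idle, queuedScores: [5000] }, now)); // true: only a future-scored retry
console.log(canTakeFastPath({ ...idle, queuedScores: [900] }, now)); // false: a message is already due
console.log(canTakeFastPath({ ...idle, envRunning: 10 }, now)); // false: env concurrency is full
```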

When the fast path is taken:

- The message is stored and pushed directly to the worker queue (`RPUSH`)

- Concurrency slots are claimed (`SADD` to the same sets used by the normal dequeue path)

- The `processQueueForWorkerQueue` job is not scheduled (no work to do)

- The TTL sorted set is skipped (the `expireRun` worker job handles TTL independently)

When any condition fails, the existing slow path runs unchanged.
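The sketch below combines the decision with its side effects in one in-memory model. All names are hypothetical; arrays, maps, and sets stand in for the queue sorted set, worker queue list, TTL sorted set, and concurrency sets, and comments note the Redis command each step roughly corresponds to. Because both paths add the run ID to the same concurrency sets, `ack` does not need to know which path a message took.

```typescript
interface RunMessage {
  runId: string;
  score: number; // priority-adjusted timestamp
  payload: string;
}

class RunQueueModel {
  readonly messages = new Map<string, RunMessage>(); // stored message bodies
  readonly queue: RunMessage[] = []; // queue sorted set, kept ordered by score
  readonly workerQueue: string[] = []; // worker queue Redis list
  readonly envConcurrency = new Set<string>(); // env concurrency set
  readonly queueConcurrency = new Set<string>(); // queue concurrency set
  readonly ttlSet = new Map<string, number>(); // TTL sorted set (runId -> expiry)
  pendingProcessJob = false; // whether processQueueForWorkerQueue is scheduled
  fastPathEnabled: boolean;
  envLimit: number;
  queueLimit: number;

  constructor(fastPathEnabled: boolean, envLimit: number, queueLimit: number) {
    this.fastPathEnabled = fastPathEnabled;
    this.envLimit = envLimit;
    this.queueLimit = queueLimit;
  }

  private hasCapacity(): boolean {
    return this.envConcurrency.size < this.envLimit && this.queueConcurrency.size < this.queueLimit;
  }

  private claim(runId: string): void {
    this.envConcurrency.add(runId); // SADD to the env concurrency set
    this.queueConcurrency.add(runId); // SADD to the queue concurrency set
  }

  // One synchronous step, standing in for the atomic enqueue Lua script.
  enqueue(msg: RunMessage, now: number, ttlExpiresAt?: number): "fast" | "slow" {
    this.messages.set(msg.runId, msg); // the message is stored on both paths
    const due = this.queue.some((m) => m.score <= now);
    if (this.fastPathEnabled && !due && this.hasCapacity()) {
      this.workerQueue.push(msg.runId); // RPUSH to the worker queue
      this.claim(msg.runId);
      return "fast"; // no processing job, no TTL sorted set entry
    }
    // Slow path, unchanged: sorted set, optional TTL entry, debounced job.
    this.queue.push(msg);
    this.queue.sort((a, b) => a.score - b.score);
    if (ttlExpiresAt !== undefined) this.ttlSet.set(msg.runId, ttlExpiresAt);
    this.pendingProcessJob = true;
    return "slow";
  }

  // Roughly what processQueueForWorkerQueue does later for slow-path messages.
  processQueue(now: number): void {
    this.pendingProcessJob = false;
    while (this.queue.length > 0 && this.queue[0].score <= now && this.hasCapacity()) {
      const next = this.queue.shift()!;
      this.claim(next.runId); // same sets as the fast path
      this.workerQueue.push(next.runId);
    }
  }

  // Ack releases the same slots regardless of which path the message took.
  ack(runId: string): void {
    this.envConcurrency.delete(runId);
    this.queueConcurrency.delete(runId);
    this.messages.delete(runId);
  }
}

const rq = new RunQueueModel(true, 10, 1);
console.log(rq.enqueue({ runId: "run_1", score: 0, payload: "{}" }, 0)); // fast: nothing due, slots free
console.log(rq.enqueue({ runId: "run_2", score: 1, payload: "{}" }, 1)); // slow: queue limit of 1 in use
rq.ack("run_1"); // releases the slot claimed on the fast path
rq.processQueue(501); // run_2 is dequeued once the job runs
console.log(rq.workerQueue); // run_1, then run_2
```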

Rollout gating

- Development environments: Fast path is always enabled

- Production environments: Gated by a new `enableFastPath` boolean on `WorkerInstanceGroup` (defaults to `false`), allowing region-by-region rollout
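The gate itself reduces to a small check. This is a minimal sketch assuming the engine already knows the environment type and has loaded the run's `WorkerInstanceGroup`; `EnvironmentKind`, `WorkerInstanceGroupSettings`, and `fastPathEnabledFor` are hypothetical names, and a missing group is treated as disabled.

```typescript
// Hypothetical environment kinds; only development is special-cased.
type EnvironmentKind = "development" | "production";

// Hypothetical shape of the relevant WorkerInstanceGroup column.
interface WorkerInstanceGroupSettings {
  enableFastPath: boolean; // new column, defaults to false
}

function fastPathEnabledFor(
  env: EnvironmentKind,
  group: WorkerInstanceGroupSettings | undefined,
): boolean {
  // Development: always on.
  if (env === "development") return true;
  // Production: only when this region's worker instance group opts in.
  return group?.enableFastPath ?? false;
}

console.log(fastPathEnabledFor("development", undefined)); // true
console.log(fastPathEnabledFor("production", { enableFastPath: false })); // false
console.log(fastPathEnabledFor("production", { enableFastPath: true })); // true
```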

Rolling deploy safety

Each process registers its own Lua scripts via `defineCommand`, and each script is identified by the SHA1 hash of its source, so old and new processes never share scripts. The Redis data structures are fully compatible in both directions: ack, nack, and release operations work identically regardless of which path a message took.
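The rolling-deploy argument rests on how Redis identifies scripts: `EVALSHA` runs a cached script by the SHA1 hex digest of its source. The sketch below uses Node's built-in `crypto` module to show that any change to a script's source yields a different digest, so an old process and a new one end up calling different cached scripts. `scriptSha` is a hypothetical helper, and the Lua strings are placeholders, not the actual enqueue scripts.

```typescript
import { createHash } from "node:crypto";

// Redis identifies a cached Lua script by the SHA1 hex digest of its source.
function scriptSha(source: string): string {
  return createHash("sha1").update(source).digest("hex");
}

// Placeholder script bodies standing in for an old and a new enqueue script.
const oldScript = "return redis.call('ZADD', KEYS[1], ARGV[1], ARGV[2])";
const newScript = "return redis.call('RPUSH', KEYS[2], ARGV[2])";

const oldSha = scriptSha(oldScript);
const newSha = scriptSha(newScript);
console.log(oldSha.length, newSha.length); // 40 40
console.log(oldSha === newSha); // false: each process runs only its own version
```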

Test plan

- Fast path taken when queue is empty and concurrency available

- Slow path when `enableFastPath` is false

- Slow path when queue has available messages (respects priority ordering)

- Fast path when queue only has future-scored messages

- Slow path when env concurrency is full

- Fast-path message can be acknowledged correctly

- Fast-path message can be nacked and re-enqueued to the queue sorted set

- Run all existing run-queue tests (ack, nack, CK, concurrency sweeper, dequeue) to verify no regressions

- Typecheck passes for run-engine and webapp