A new input streams API enables bidirectional communication, allowing developers to stream real-time data into paused or running task processes to build interactive workflows and human-in-the-loop systems.

“Running tasks now accept real-time data, and noisy neighbors have been evicted from the batch queue.”

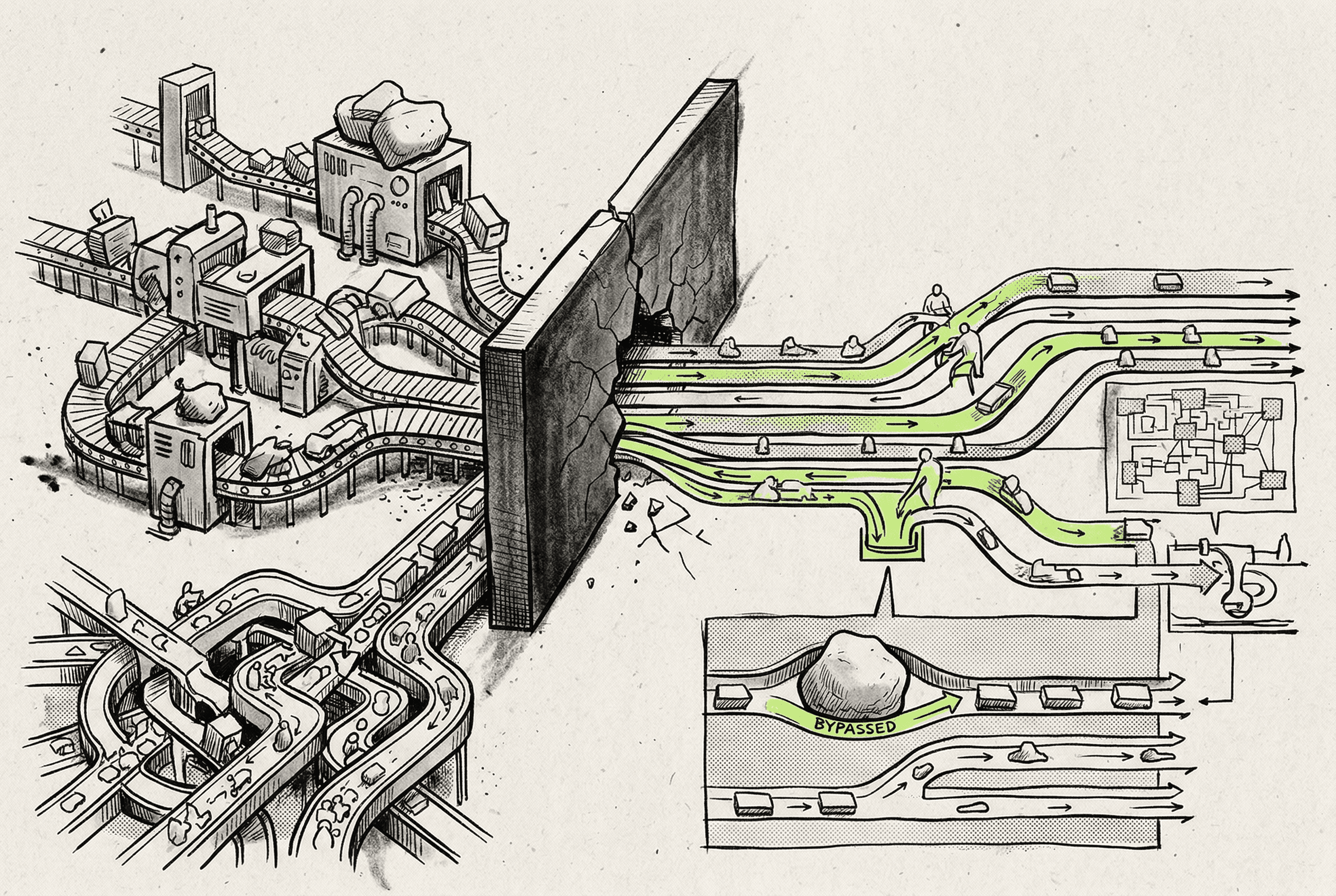

Workflows are no longer strictly fire-and-forget. You can now stream typed data directly into running tasks mid-execution. This allows applications to request user input, wait for manual approvals, or receive live frontend updates without restarting the process.
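As a rough illustration of the idea, the sketch below models a typed input stream as an in-memory queue that a task can await. It is not the platform's SDK: `InputStream`, `Approval`, and `deployTask` are hypothetical names chosen for this example, and a real implementation would deliver values over the network rather than within a single process.

```typescript
// Hypothetical sketch, not the platform's SDK. All names are illustrative.
type Approval = { approved: boolean; reviewer: string };

class InputStream<T> {
  private buffer: T[] = [];
  private waiters: Array<(value: T) => void> = [];

  // Called from outside the task, e.g. by a frontend or an approval UI.
  send(value: T): void {
    const waiter = this.waiters.shift();
    if (waiter) waiter(value); // a task is already waiting: hand the value over
    else this.buffer.push(value); // nobody is waiting yet: keep it for later
  }

  // Called inside the task; resolves as soon as a value is available.
  next(): Promise<T> {
    if (this.buffer.length > 0) {
      return Promise.resolve(this.buffer.shift() as T);
    }
    return new Promise<T>((resolve) => this.waiters.push(resolve));
  }

  // Number of values sent but not yet consumed.
  get buffered(): number {
    return this.buffer.length;
  }
}

async function deployTask(approvals: InputStream<Approval>): Promise<string> {
  // ... work that happens before the approval gate ...
  const decision = await approvals.next(); // the task waits here without restarting
  return decision.approved
    ? `deployed (approved by ${decision.reviewer})`
    : "cancelled";
}

// Usage: start the task, then stream the approval into it mid-execution.
const approvals = new InputStream<Approval>();
const outcome = deployTask(approvals);
approvals.send({ approved: true, reviewer: "dana" });
outcome.then((message) => console.log(message)); // deployed (approved by dana)
```

Typing the stream with a generic parameter means the sender and the task agree on the payload shape at compile time, which is the property the "typed data" wording suggests.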

Batch processing is now strictly isolated, guaranteeing that high-volume users cannot stall operations for others. Furthermore, a single oversized payload will no longer crash an entire batch run; the system simply flags the giant item and processes the rest.
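The per-item failure behavior can be sketched as follows. This is a minimal model under stated assumptions: the `MAX_ITEM_BYTES` limit, the `BatchItem` and `ItemResult` types, and the `processBatch` function are all hypothetical, and the real size limit and result format are not given in this post.

```typescript
// Hypothetical limit and types, chosen only for illustration.
const MAX_ITEM_BYTES = 1024;

type BatchItem = { id: string; payload: unknown };
type ItemResult =
  | { id: string; status: "queued"; bytes: number }
  | { id: string; status: "failed"; reason: string };

function processBatch(
  items: BatchItem[],
  maxBytes: number = MAX_ITEM_BYTES
): ItemResult[] {
  const encoder = new TextEncoder();
  return items.map((item): ItemResult => {
    // Measure the serialized payload in UTF-8 bytes.
    const bytes = encoder.encode(JSON.stringify(item.payload)).length;
    if (bytes > maxBytes) {
      // Flag only this item; the rest of the batch keeps going.
      return {
        id: item.id,
        status: "failed",
        reason: `payload is ${bytes} bytes, limit is ${maxBytes}`,
      };
    }
    return { id: item.id, status: "queued", bytes };
  });
}

// Usage: the oversized middle item fails, its neighbors are still queued.
const batchResults = processBatch([
  { id: "a", payload: "small" },
  { id: "b", payload: "x".repeat(5000) },
  { id: "c", payload: { n: 1 } },
]);
console.log(batchResults.map((r) => `${r.id}:${r.status}`).join(", "));
// a:queued, b:failed, c:queued
```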

Environment variable synchronization with Vercel now automatically bypasses strict validation errors that previously derailed deployments. We also updated dashboard navigation to use standard industry terminology so your team spends less time hunting for metrics.
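One way such a recovery path could work is to try strict validation first and fall back to a lenient read when it fails. The sketch below only illustrates that pattern. It does not call or mirror the real Vercel SDK, and `EnvVar`, `parseStrict`, `parseLenient`, and `readEnvVars` are hypothetical names with an assumed response shape.

```typescript
// Hypothetical shapes: this does not call or mirror the real Vercel SDK.
type EnvVar = { key: string; value: string };
type StrictResult =
  | { ok: true; vars: EnvVar[] }
  | { ok: false; reason: string };

function isRecord(value: unknown): value is Record<string, unknown> {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// Strict path: reject the whole response if any entry has an unexpected shape.
function parseStrict(raw: unknown): StrictResult {
  if (!Array.isArray(raw)) return { ok: false, reason: "expected an array" };
  const vars: EnvVar[] = [];
  for (let i = 0; i < raw.length; i++) {
    const entry: unknown = raw[i];
    if (
      !isRecord(entry) ||
      typeof entry.key !== "string" ||
      typeof entry.value !== "string" ||
      Object.keys(entry).length !== 2
    ) {
      return { ok: false, reason: `entry ${i} failed validation` };
    }
    vars.push({ key: entry.key, value: entry.value });
  }
  return { ok: true, vars };
}

// Recovery path: keep only the fields we need and skip unusable entries.
function parseLenient(raw: unknown): EnvVar[] {
  if (!Array.isArray(raw)) return [];
  const vars: EnvVar[] = [];
  for (const entry of raw as unknown[]) {
    if (
      isRecord(entry) &&
      typeof entry.key === "string" &&
      typeof entry.value === "string"
    ) {
      vars.push({ key: entry.key, value: entry.value });
    }
  }
  return vars;
}

// Try the strict path first; recover with the lenient one if it fails.
function readEnvVars(raw: unknown): EnvVar[] {
  const strict = parseStrict(raw);
  return strict.ok ? strict.vars : parseLenient(raw);
}
```

The design choice here is that a schema mismatch in one entry degrades the result instead of aborting the whole sync.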

A new two-level dispatch architecture guarantees fair queue processing, ensuring high-volume users can no longer stall operations for everyone else.
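The post does not describe the internals of the two-level design, so the following is only one plausible reading: the first level rotates across tenants, and the second level is a FIFO queue per tenant. `TwoLevelDispatcher` and the tenant names are hypothetical.

```typescript
// Hypothetical sketch: level 1 rotates across tenants, level 2 is FIFO per tenant.
class TwoLevelDispatcher<T> {
  private queues = new Map<string, T[]>();
  private order: string[] = []; // tenants with pending work, in rotation order

  enqueue(tenant: string, item: T): void {
    let queue = this.queues.get(tenant);
    if (!queue) {
      queue = [];
      this.queues.set(tenant, queue);
      this.order.push(tenant); // the tenant joins the rotation once
    }
    queue.push(item);
  }

  dequeue(): { tenant: string; item: T } | undefined {
    const tenant = this.order.shift();
    if (tenant === undefined) return undefined; // nothing pending
    const queue = this.queues.get(tenant)!;
    const item = queue.shift() as T;
    if (queue.length > 0) this.order.push(tenant); // back of the rotation
    else this.queues.delete(tenant); // no work left for this tenant
    return { tenant, item };
  }
}

// Usage: a heavy tenant enqueues first, but the small tenant is not starved.
const dispatcher = new TwoLevelDispatcher<string>();
dispatcher.enqueue("heavy", "a1");
dispatcher.enqueue("heavy", "a2");
dispatcher.enqueue("heavy", "a3");
dispatcher.enqueue("small", "b1");

const served: string[] = [];
for (
  let next = dispatcher.dequeue();
  next !== undefined;
  next = dispatcher.dequeue()
) {
  served.push(`${next.tenant}:${next.item}`);
}
console.log(served.join(", ")); // heavy:a1, small:b1, heavy:a2, heavy:a3
```

With a single shared FIFO queue, `b1` would wait behind all three of the heavy tenant's items. Under this scheme each tenant gets at most one item per rotation.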

The repository's massive root context file is now split into directory-specific instructions and checked by an automated CI audit, giving AI coding agents hyper-local guidance.

- A recovery mechanism bypasses strict Vercel SDK schema validation errors, fixing environment variable synchronization for staging and production deployments. (bugfix)

- Large payloads no longer abort entire batch processes. Individual oversized items are now marked as failed while the rest of the batch stream continues normally. (feature)